How formal methods helped AWS to design amazing services

11 min read, last updated on 2020-12-15

Today I would like to share an exciting story that I have discovered when preparing for one of my AWS certification exams. I have found a service called AWS Zelkova, which is a part of a provable security initiative, and according to the tagline, it aims into:

Security Assurance, Backed by Mathematical Proof

Provable Security on AWS

If you are interested in Zelkova itself, here is an explainer video.

However, I started thinking: is it the only example of using formal methods on the AWS side? 🤔 It turns out it is not.

Before we talk about particular services, let’s start with an introduction, what formal methods are, and what AWS used in their design process.

What are the Formal Methods?

In computer science, specifically software engineering and hardware engineering, formal methods are a particular kind of mathematically rigorous techniques for the specification, development, and verification of software and hardware systems.

Wikipedia: Formal Methods

For many people, it sounds boring and scary at the same thing, but I have started digging, and I suppose you are intrigued as well as you are reading this.

From that extensive list posted in the Wikipedia entry, I have recognized a couple of entries, but my attention was drawn to TLA+.

I knew this name. A few years earlier, I have read about TLA and TLA+ inside the “Principles of Concurrent and Distributed Programming” book by Mordechai Ben-Ari. One search away was an article on an official TLA+ website on how AWS used it internally to design services.

What is TLA+?

According to its definition, TLA+ is a high-level language for modeling programs and systems - mostly concurrent and distributed ones. It’s based on the idea that the best way to describe things precisely is with pure mathematics. TLA+ and its tools are useful for eliminating fundamental design errors, which are hard to find and expensive to correct in code.

The official name of the language is TLA+, with the + a superscript. That’s the way it should appear in print. However, some media doesn’t cope well with superscripts, so it’s written as TLA+.

TLA+ vs PlusCal

If you will research this topic further, there is one other thing to clarify. Together with TLA+, you can often find another term: PlusCal. What is going on there?

PlusCal is a language for writing algorithms - mostly concurrent and distributed ones. It is meant to replace pseudocode with precise, testable code. PlusCal looks like a simple toy programming language, but with constructs for describing concurrency and indeterminacy. It is infinitely more expressive than any programming language because any mathematical formula can be used as a PlusCal expression.

A PlusCal algorithm is translated into a TLA+ model that can be checked with the TLA+ tools. Because it looks like a programming language, most engineers find PlusCal easier to learn than TLA+. It seems like a programming language, PlusCal cannot structure complex models as TLA+ can.

Historical Context of TLA+

Last but not least - let’s talk a bit about its history.

TLA+ originates from a purely mathematical idea called temporal logic. Leslie Lamport’s search for a practical method of specification for distributed systems resulted in the 1983 paper “Specifying Concurrent Programming Modules”, which introduced the idea of describing state transitions as boolean-valued functions of primed and unprimed variables.

Work continued throughout the 1980s, and Lamport began publishing papers on the temporal logic of actions in 1990. However, it was not formally introduced until “The Temporal Logic of Actions” was published in 1994. TLA enabled the use of actions in temporal formulas, which, according to Lamport:

It provides an elegant way to formalize and systematize all the reasoning used in concurrent system verification.

Leslie Lamport

TLA specifications mostly consisted of ordinary non-temporal mathematics, which Lamport found less cumbersome than a purely temporal specification. TLA provided a mathematical foundation to the specification language TLA+, introduced with the paper “Specifying Concurrent Systems with TLA+” in 1999. Later that same year, Yuan Yu wrote the TLC, a model checker for TLA+ specifications.

TLC was used to find errors in the cache coherence protocol for a Compaq multiprocessor, and it is extensively used to check other specifications written in TLA+ directly or that are translated from PlusCal to TLA+ which offers a better entry point for the regular programmers. It is worth mentioning that TLC works only at the TLA+ level, so it must be a way for translating a PlusCal to the TLA+ to check the desired models.

Okay, but what about AWS?

Amazon Web Services (AWS) has been using TLA+ since 2011. In April 2015, six engineers published an article describing how it was introduced to AWS and how it was used there. Here is an abridged version of it:

https://lamport.azurewebsites.net/tla/amazon-excerpt.html

Here are the critical insights listed in the article:

- Formal methods find bugs in system designs that cannot be found through any other technique they know of.

- Formal methods are surprisingly feasible for mainstream software development and give a good return on investment.

- At Amazon, formal methods are routinely applied to the design of sophisticated real-world software, including public cloud services.

This article provides an excellent picture of TLA+ use in an industrial environment, describing its benefits and what it can’t do.

However, that’s not the only resource about it. James Hamilton (VP and Distinguished Engineer at AWS) wrote a blog post about this approach, and Tim Rath (one of the co-authors of the whitepaper) gave a lecture about the use of TLA+ at AWS. I strongly recommend the talk, but we will return to it. Additionally, there are two additional whitepapers done by the same crew as the cited one.

Here is what Chris Newcombe, the lead author of the article, has written elsewhere:

TLA+ is the most valuable thing that I’ve learned in my professional career. It has changed how I work by giving me an immensely powerful tool to find subtle flaws in system designs. It has changed how I think by giving me a framework for constructing new kinds of mental-models, by revealing the precise relationship between correctness properties and system designs, and by allowing me to move from `plausible prose’ to precise statements much earlier in the software development process.

Chris Newcombe

Huh. So TLA+ is not that hard to learn. I also found this bit inside the paper where Amazon engineers report that:

Engineers from entry-level to principal have been able to learn TLA+ from scratch and get useful results in two to three weeks, in some cases in their personal time on weekends and evenings, without further help or training.

Chris Newcombe paraphrasing AWS Engineers feelings about TLA+

Interesting. Let’s see then how it helped!

TLA+ use cases at AWS

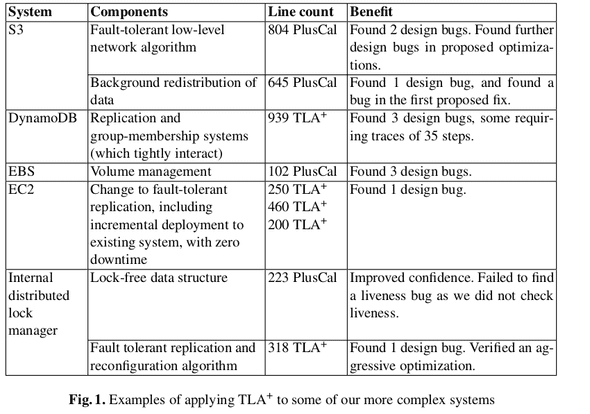

One of the articles contains the following table:

Now, we are talking! An exciting list of exact implementations (including LoC for models in PlusCal and/or TLA+). This list includes what kind of bugs were found by formal methods.

Outside of technical description of those bugs (some were really complicated and subtle, possibly not able to test and detect any other way) and thoughts about adopting that tool in AWS. I found one fascinating piece.

In one of those papers, they have summarised the main traits they’ve looked for. Everything regarding a choice between TLA+ and the rest of the tools. It is fascinating for me as I have some experience with other tools and alternatives (e.g., Property-Based Testing, Coq or Jepsen) and direct comparison is a thing that gave me additional insight:

- Handle Very Large, Complex, or Subtle Problems.

- TLA+ and other competitors are successfully used in the industry. Still, only the first one provides the most comprehensive way of handling distributed systems and materials and whitepapers about the outcomes. The model checker is a very stable and performant - evaluating model with 31 billion states took in 2014 approximately 5 weeks on a single EC2 instance with 16 virtual CPUs, 60 GB RAM, and 2 TB of local SSD storage.

- Minimize Cognitive Burden.

- They needed a small language that is understandable yet expressive for use in distributed systems areas, e.g., operators that allow you to express the examined system’s liveness properties.

- Yet, many alternatives impose many new and esoteric requirements on writers, e.g., Coq type system (oh yeah, tell me more about that). TLA+ is untyped as the type system is yet another external constraint that needs to be aligned and checked.

- High Return on Investment.

- They’ve searched for a tool that can handle all types of problems and provide a quick learning experience to improve time to market and easily focus on real issues.

After reading the papers, I did not stop. I have dug deeper and found another gem. It’s a talk done by Tim Rath (I mentioned it above, he is one of the co-authors of the original paper) with the exciting title “The Evolution of Testing Methodology at AWS: From Status Quo to Formal Methods with TLA+” from 2015, which is available here.

And it provides even more information about DynamoDB work, where Tim was a part of the team and even more details on the comparison to the generative testing (different name on property-based testing) or process clusters technique (which is very similar to what Jepsen does). Long story short: any mentioned alternative to TLA+ did not provide enough confidence for them.

He also made two significant observations.

One about Test Adequacy Criteria. Most projects use Code Coverage currently, and it provides valuable information, but it’s based on weak criteria. If you want to perform more exhaustive testing, it requires time and experience yet still focuses more or less on happy path scenarios. And you are not testing the design, which is the essential part of TLA+.

The second one is important too. Testing and Quality is often not as sexy as other topics, but it’s definitely providing business value and equals or overcomes the time spend on development and implementation. I think we often forget about it, especially when standing on the shoulders of giants.

Summary

For completeness - here is a list of all papers that I have found on this topic:

- Why Amazon Chose TLA+.

- How Amazon Web Services Uses Formal Methods.

- Abridged Version by Leslie Lamport.

- Use of Formal Methods at Amazon Web Services.

- Side note: this has the same premises and conclusions, so I recommend reading the other two.

Okay, those examples are 4-5 years old? Do you have something more recent?

Yes, I do:

- Not precisely related to the AWS, but there are plenty of talks. There is even a dedicated conference about TLA+ called - not surprisingly - TLA+ Conf (talks are from 2019).

- Leslie Lamport’s book is updated regularly. New books are arriving, e.g., Hillel Wayne’s book about Practical TLA+.

- More and more blog posts are available. It shows an interest in the communities about this topic. I have mentioned a few above and below in the references.

Why should I care?

I do not know, but if you are still here, it means that you care about the quality of software. The one key takeaway from that journey is that we need to think a bit more about quality and outside of the box that we currently are.

Of course, I am sure that you do not need formal methods in most cases. I am pretty sure that there are a few companies in the world that need that daily. Yet, the software is becoming more distributed (ergo: complicated) year over year, and there are plenty of critical areas that are relying on it. Maybe in some cases, we should think in the first-place about correctness, planning, and formal verification.

And even if you are not doing such stuff, it is worth knowing that there are such techniques out there and many similar others (e.g., property-based testing).

I find incredibly refreshing the talk done by Tim Rath, especially considering that he refers to stuff made directly from academia and applying it in the industry with tremendous success. Being brutally honest, I am pretty sure that is not how we imagine research work nowadays.

References

- Practical TLA+ by Hillel Wayne

- TLA+ - Wikipedia

- The TLA+ Home Page

- Learn TLA+ (amazing site, I strongly recommend it)

- Challenges in Designing at Scale: Formal Methods in Building Robust Distributed Systems

- The Evolution of Testing Methodology at AWS: From Status Quo to Formal Methods with TLA+

- Introduction to TLA+ Model Checking in the Command Line

- Using TLA+ to Model Cascading Failures

- tlaplus/tlaplus - GitHub

- TLA+ Cheatsheet

- Specifying Systems in TLA+ by Leslie Lamport